Walmart In-Store CoPilot

AI shopping assistant that locates items, optimizes shopping lists, and provides real-time store navigation

Walmart In-Store CoPilot - AI shopping assistant in action

Overview

Walmart In-Store CoPilot is an AI assistant designed to improve the in-store shopping experience at Walmart Supercenters. The application helps customers navigate large stores, locate items, optimize their shopping routes, and discover meal ideas, all through a conversational interface.

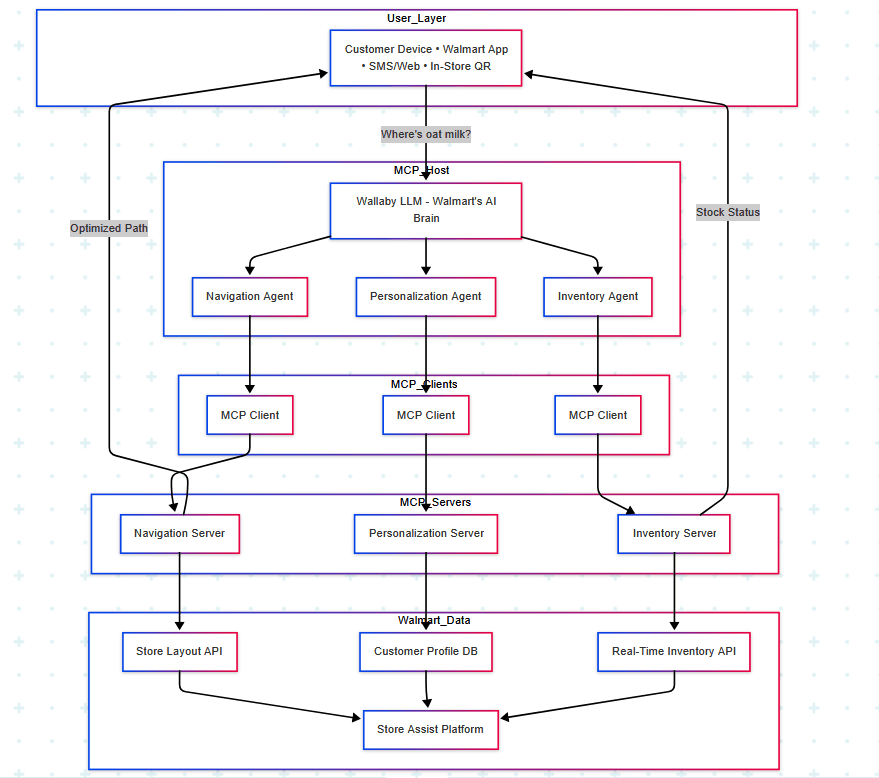

Built with a multi-agent architecture powered by Llama 3.1 and LangChain, the CoPilot integrates with real-time store data through the Model Context Protocol (MCP) to provide aisle locations, price checks, stock levels, and personalized recommendations.

The Problem

Shopping in large retail stores like Walmart Supercenters can be overwhelming and time-consuming. Customers face several pain points:

• Difficulty locating items in massive stores with hundreds of aisles

• Inefficient shopping paths that waste time zigzagging through the store

• No real-time stock information, leading to wasted trips to empty shelves

• Little personalized assistance unless a store employee can be found

I built the Walmart In-Store CoPilot to solve these challenges by creating an always-available AI shopping companion that makes shopping faster, easier, and more enjoyable.

Architecture & Technical Approach

The CoPilot uses a multi-agent architecture in which specialized AI agents each handle a different aspect of the shopping experience:

Agent System

Navigation Agent

Specializes in providing precise aisle locations and optimal routes through the store. Integrates with the Store Layout API via MCP to access real-time floor plans and calculate efficient shopping paths.

Personalization Agent

Delivers meal suggestions and recipe ideas based on customer preferences and available ingredients. Connects to Customer Profile DB through MCP to provide tailored recommendations.

Inventory Agent

Provides real-time price checks and stock level information. Queries the Real-Time Inventory API via MCP to ensure customers know product availability before heading to an aisle.

Coordinator (Wallaby LLM)

Central orchestrator powered by Llama 3.1 running via Ollama. Routes user queries to appropriate agents and synthesizes responses into natural, conversational answers.

Multi-agent system with MCP integration for real-time store data access
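The routing step described above can be illustrated with a minimal sketch. It assumes a simple keyword table in place of the Llama 3.1 routing decision, and the agent functions, `ROUTES` table, and keyword sets are hypothetical names chosen for illustration, not the project's actual code.

```python
import re
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-ins for the three specialized agents. In the real system
# each one would query its MCP server; here they only tag the query.
def navigation_agent(query: str) -> str:
    return f"[navigation] {query}"

def inventory_agent(query: str) -> str:
    return f"[inventory] {query}"

def personalization_agent(query: str) -> str:
    return f"[personalization] {query}"

# Hypothetical keyword table standing in for the LLM's routing decision.
ROUTES: Dict[str, Tuple[Callable[[str], str], frozenset]] = {
    "navigation": (navigation_agent, frozenset({"where", "aisle", "find", "route"})),
    "inventory": (inventory_agent, frozenset({"price", "cost", "stock", "available"})),
    "personalization": (personalization_agent, frozenset({"recipe", "meal", "dinner", "suggest"})),
}

def route(query: str) -> List[str]:
    """Return the names of every agent whose keywords appear in the query."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    return [name for name, (_, keywords) in ROUTES.items() if words & keywords]

def handle(query: str) -> str:
    """Dispatch the query to each matched agent and join their replies."""
    names = route(query)
    if not names:
        return "[coordinator] no specialized agent matched"
    return "\n".join(ROUTES[name][0](query) for name in names)
```

A query such as "Is milk in stock and where is it?" matches both the navigation and inventory entries, so both agents would contribute to the answer. In the described system the coordinator would also rewrite the combined replies into one conversational response, which this sketch leaves out.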

Frontend & Backend

Frontend

Built with HTML5, Tailwind CSS, and JavaScript. Features an interactive store map, conversational chat interface, and shopping list management UI.

Backend

A FastAPI server orchestrates agent communication using the LangChain framework. The fastmcp library connects to the MCP servers for store data, and the application is served with the Uvicorn ASGI server.

Key Features

📍 Intelligent Item Location

Ask "Where is milk?" and get precise aisle numbers instantly. The Navigation Agent accesses real-time store layouts to provide accurate directions.

📝 Shopping List Optimization

Provide a list of items and the CoPilot locates each one, then orders the stops into an efficient path through the store that minimizes backtracking.
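One simple way to reduce backtracking is to group items by aisle and visit the aisles in ascending order. The sketch below assumes aisles are numbered in walking order, which a real floor plan may not satisfy; the `AISLE_OF` lookup and `plan_route` function are hypothetical and stand in for whatever the Store Layout API returns.

```python
from typing import Dict, List, Tuple

# Hypothetical item -> aisle lookup; real data would come from the Store Layout API.
AISLE_OF: Dict[str, int] = {"milk": 12, "bread": 3, "eggs": 12, "pasta": 7, "apples": 1}

def plan_route(
    items: List[str], aisle_of: Dict[str, int]
) -> Tuple[List[Tuple[int, List[str]]], List[str]]:
    """Group items by aisle and return the stops in ascending aisle order.

    Returns (stops, missing): stops is a list of (aisle, items) pairs and
    missing lists the items that have no known aisle.
    """
    by_aisle: Dict[int, List[str]] = {}
    missing: List[str] = []
    for item in items:
        aisle = aisle_of.get(item.lower())
        if aisle is None:
            missing.append(item)
        else:
            by_aisle.setdefault(aisle, []).append(item)
    stops = sorted(by_aisle.items(), key=lambda stop: stop[0])
    return stops, missing

stops, missing = plan_route(["Milk", "bread", "eggs", "kale"], AISLE_OF)
for aisle, names in stops:
    print(f"Aisle {aisle}: {', '.join(names)}")
print("Not found:", missing)
```

Sorting by aisle number is only a rough approximation of a shortest path. A layout-aware version would compute walking distances between aisles from the floor plan instead.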

🍳 Meal Suggestions

Get recipe ideas based on items you have or need. The Personalization Agent suggests meals and helps locate all necessary ingredients in the store.

💰 Price & Stock Checks

Quickly check prices and current stock levels of any item before heading to the aisle. Real-time inventory data prevents wasted trips.

🗺️ Interactive Store Map

Visualize item locations on an interactive floor plan with highlighted aisles. Toggle fullscreen for easy navigation while shopping.

💬 Conversational Interface

Natural-language understanding powered by Llama 3.1 makes interactions intuitive. Ask questions as you would ask a store employee.

Challenges & Solutions

Multi-Agent Coordination

Challenge: Complex queries like "Show me ingredients for pasta and their locations" require multiple agents to work together seamlessly.

Solution: Implemented LangChain agent orchestration with a central coordinator (Wallaby LLM) that routes queries to specialized agents and aggregates responses into coherent answers.
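As an illustration of how a query like the pasta example might chain two agents, here is a minimal sketch using plain asyncio rather than LangChain. It assumes the personalization step must finish first and that the location lookups can then run concurrently; the `RECIPES` and `AISLES` tables and all function names are hypothetical.

```python
import asyncio
from typing import Dict, List

# Hypothetical data standing in for the Personalization and Navigation back ends.
RECIPES: Dict[str, List[str]] = {"pasta": ["spaghetti", "tomato sauce", "parmesan"]}
AISLES: Dict[str, int] = {"spaghetti": 7, "tomato sauce": 7, "parmesan": 14}

async def suggest_ingredients(dish: str) -> List[str]:
    """Personalization step: return the ingredients for a dish (empty if unknown)."""
    return RECIPES.get(dish, [])

async def locate(item: str) -> str:
    """Navigation step: return a human-readable location for one item."""
    aisle = AISLES.get(item)
    return f"aisle {aisle}" if aisle is not None else "not found"

async def ingredients_with_locations(dish: str) -> Dict[str, str]:
    """Get the ingredients first, then look up all locations concurrently."""
    ingredients = await suggest_ingredients(dish)
    if not ingredients:
        return {}
    locations = await asyncio.gather(*(locate(item) for item in ingredients))
    return dict(zip(ingredients, locations))

print(asyncio.run(ingredients_with_locations("pasta")))
```

The second step depends on the output of the first, so the two cannot run in parallel, but the per-item lookups are independent of one another and can be issued together.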

Real-Time Data Integration

Challenge: Accessing live store data (inventory, layouts, customer profiles) from multiple backend systems in real time.

Solution: Used Model Context Protocol (MCP) with dedicated MCP servers for Navigation, Personalization, and Inventory. The fastmcp library provides efficient client connections from agents to these servers.

Response Latency

Challenge: Customers expect instant responses, but multi-agent systems and LLM inference can introduce latency.

Solution: Optimized with local LLM deployment via Ollama, implemented caching for frequent queries, and used async processing in FastAPI to maintain sub-second response times.
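The caching idea can be sketched as a small in-memory cache with a time-to-live, placed in front of an async agent call. This is an assumption about how such a cache might look, not the project's implementation; `TTLCache`, `slow_agent`, and the `CALLS` counter are hypothetical names.

```python
import asyncio
import time
from typing import Awaitable, Callable, Dict, Tuple

class TTLCache:
    """In-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float) -> None:
        self.ttl = ttl_seconds
        self._store: Dict[str, Tuple[float, str]] = {}

    async def get_or_compute(
        self, query: str, compute: Callable[[str], Awaitable[str]]
    ) -> str:
        # Normalize so "Where is milk?" and "where is milk? " share one entry.
        key = query.strip().lower()
        hit = self._store.get(key)
        if hit is not None and time.monotonic() - hit[0] < self.ttl:
            return hit[1]
        value = await compute(query)
        self._store[key] = (time.monotonic(), value)
        return value

CALLS = {"n": 0}  # counts how often the slow path actually runs

async def slow_agent(query: str) -> str:
    """Hypothetical stand-in for an LLM or MCP round trip."""
    CALLS["n"] += 1
    await asyncio.sleep(0.01)
    return f"answer for {query}"

async def demo() -> None:
    cache = TTLCache(ttl_seconds=60)
    first = await cache.get_or_compute("Where is milk?", slow_agent)
    second = await cache.get_or_compute("where is milk? ", slow_agent)
    print(first == second, CALLS["n"])

asyncio.run(demo())
```

The TTL is a trade-off: aisle locations change rarely and could be cached for a long time, while stock levels go stale quickly and would need a much shorter lifetime or no caching at all.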

Interactive Map Rendering

Challenge: Displaying complex store layouts with hundreds of aisles while maintaining smooth user interaction.

Solution: Built efficient SVG-based store maps with JavaScript for dynamic aisle highlighting. Used CSS transforms for smooth zoom and pan interactions.

Impact & Results

Key Takeaways

• Agent orchestration is complex but powerful for handling diverse user queries

• Model Context Protocol (MCP) provides clean abstraction for integrating multiple data sources

• Local LLM deployment via Ollama enables fast, cost-effective inference

• Real-world AI systems need efficient caching and async processing for optimal performance